Future of Computing: Apple Intelligence, visionOS & Silicon

Future of Computing: How Apple Intelligence and visionOS are Redefining the Digital Frontier

The convergence of generative artificial intelligence, spatial computing, and a vertically integrated hardware ecosystem marks the beginning of a new epoch in personal computing. For decades, the industry has chased the ideal of a truly context-aware machine—one that doesn’t just execute commands but understands the nuances of user intent while respecting the absolute sanctity of personal data. Today, this vision is crystallizing through a sophisticated orchestration of software frameworks, custom-designed silicon, and a commitment to sustainable infrastructure. By examining the structural shifts in Apple Intelligence, the engineering hurdles overcome in visionOS, and the hardware breakthroughs in Apple Silicon, we can see the blueprint of a future where technology is both more immersive and more invisible.

This transition is not occurring in a vacuum. As these technologies emerge, it is clear that the way AI and robotics redefine the global economy will be heavily influenced by the platforms that prioritize privacy-first intelligence. The move from centralized cloud processing to edge-heavy architectures is fundamentally changing how businesses interact with consumer data and how individuals interact with their environment.

The Architectural Shift: From Discrete Models to Apple Intelligence

At the heart of this transformation is Apple Intelligence, a system-wide generative AI framework that departs from the industry standard of siloed, cloud-dependent chatbots. Historically, machine learning on mobile devices was limited to discrete tasks: identifying a face in a photo, transcribing a voice memo, or predicting the next word in a text message. Apple Intelligence moves beyond these “point solutions” by integrating Large Language Models (LLMs) and diffusion models directly into the core of the operating system.

The Philosophy of On-Device Processing

The architecture relies on a hybrid approach that prioritizes on-device processing. By running models locally on the Apple Neural Engine (ANE), the system keeps requests involving the most sensitive data (emails, personal schedules, and private messages) on the device whenever a local model can handle them. This is not merely a privacy feature; it is a performance necessity. Local processing eliminates the latency associated with server round-trips, allowing features like Writing Tools and notification prioritization to feel like instantaneous extensions of the user interface.

To achieve this, Apple has optimized the transformer architecture to run efficiently on mobile silicon. These models are quantized and compressed to maintain high accuracy while minimizing their memory footprint. For the developer community, the gateway to this intelligence is the “App Intents” framework. This represents a fundamental shift in how apps interact with the OS. Instead of users manually navigating through menus to perform a task, developers can define “intents” that allow Siri and the system’s orchestration engine to take actions on the user’s behalf. Developers looking to leverage these capabilities can find extensive guidance in the Apple Machine Learning Research archives, which detail the optimization of transformer models for edge devices.
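To make the idea of quantization concrete, here is a minimal sketch of textbook symmetric 8-bit quantization. It is not Apple's actual scheme, which the text does not describe; the function names and the sample weights are hypothetical and chosen only for illustration.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    # One shared scale for the whole tensor; guard against an all-zero tensor.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [round(w / scale) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the integer codes."""
    return [q * scale for q in quantized]


def footprint_bytes(num_params, bits_per_weight):
    """Storage needed for the weights alone at a given precision."""
    return num_params * bits_per_weight // 8


# Hypothetical weights, for illustration only.
weights = [0.82, -1.27, 0.05, 0.4, -0.63]
codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
```

Each weight is stored as a small integer plus one shared scale, so the rounding error per weight is at most half the scale. The `footprint_bytes` helper shows why this matters on a phone: moving from 16-bit to 4-bit weights cuts the storage for the same parameter count by a factor of four.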

Private Cloud Compute: Redefining Cloud Privacy

However, generative AI is computationally expensive. To handle more complex reasoning tasks without compromising privacy, the ecosystem introduces Private Cloud Compute (PCC). This is arguably one of the most significant advances in cloud security in the last decade. In traditional cloud processing, data is decrypted on general-purpose servers where operators can inspect, log, or retain it. PCC instead runs requests on dedicated Apple silicon servers with a hardened, minimal operating system.

This environment is designed so that even Apple employees cannot access the data, and the software running on these servers is cryptographically verifiable by independent researchers. This “stateless” cloud ensures that once a task is completed, the data is not retained, effectively extending the privacy of the iPhone into the data center. Paired with a semantic index of app data that is built on the device, the AI gains a holistic understanding of the user’s digital life without the trade-offs typical of traditional data harvesting models.
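One way to picture "cryptographically verifiable" software is a client that refuses to send data unless the server's reported software measurement matches one that has been published for inspection. The sketch below is a simplified illustration under that assumption. The names `measure`, `is_trusted`, and `send_if_verified` are hypothetical, and real attestation also relies on hardware-rooted keys and signed statements that are not modeled here.

```python
import hashlib


def measure(software_image: bytes) -> str:
    """A measurement is modeled here as the SHA-256 digest of the software image."""
    return hashlib.sha256(software_image).hexdigest()


def is_trusted(reported_measurement: str, published_measurements: set) -> bool:
    """Trust a server only if its measurement appears in the published set."""
    return reported_measurement in published_measurements


def send_if_verified(request, reported_measurement, published_measurements, send):
    """Release the request only after the measurement check passes."""
    if not is_trusted(reported_measurement, published_measurements):
        raise PermissionError("server software does not match any published release")
    return send(request)


# Hypothetical release images, for illustration only.
published = {measure(b"release-1"), measure(b"release-2")}
reply = send_if_verified("summarize my notes", measure(b"release-1"), published,
                         send=lambda req: f"processed: {req}")
```

The design choice worth noting is that the check happens on the client, before any data leaves the device. A server running modified software produces a different digest and never receives the request.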

Spatial Computing: Engineering the “Infinite Canvas”

While generative AI provides the “brain” for the new ecosystem, spatial computing provides the “body.” The introduction of visionOS has shifted the paradigm from 2D screen-based interaction to a 3D volumetric experience. This is not merely an evolutionary step in augmented reality; it is a complete re-engineering of the human-computer interface.

The Mechanics of visionOS

The engineering of visionOS is built around the concept of the “infinite canvas.” Unlike a traditional monitor, which is constrained by physical borders, visionOS allows users to place windows, 3D volumes, and fully immersive “spaces” anywhere in their physical environment. This requires an extraordinary amount of computational overhead. To maintain the illusion that a digital window is physically present in a room, the system must account for lighting, shadows, and occlusion in real-time.
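Occlusion, in its simplest form, is a per-pixel depth comparison: whichever surface is nearer to the viewer is the one that gets drawn. The following sketch shows that idea on a single row of pixels. It is a conceptual illustration, not visionOS code; the function name and the sample scene are hypothetical.

```python
def composite_row(camera_pixels, camera_depths, virtual_pixels, virtual_depths):
    """Per-pixel depth test: the surface nearer to the viewer wins."""
    output = []
    for cam_px, cam_d, virt_px, virt_d in zip(
        camera_pixels, camera_depths, virtual_pixels, virtual_depths
    ):
        # No virtual content at this pixel, or a real object sits in front of it.
        if virt_d is None or cam_d < virt_d:
            output.append(cam_px)
        else:
            output.append(virt_px)
    return output


# Hypothetical scene: a virtual window 1.5 m away, a wall at 3 m,
# and the user's hand at 0.5 m passing in front of the window.
row = composite_row(
    camera_pixels=["wall", "wall", "hand", "wall"],
    camera_depths=[3.0, 3.0, 0.5, 3.0],
    virtual_pixels=[None, "window", "window", "window"],
    virtual_depths=[None, 1.5, 1.5, 1.5],
)
```

Here the hand correctly hides part of the window because it is closer than the window's placement depth, while the wall behind the window is covered by it.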

A critical component of this stability is the Real-Time Subsystem. To prevent the motion sickness often associated with head-mounted displays, the “photon-to-photon” latency (the time between the cameras capturing the world and the internal displays showing it) must be less than 12 milliseconds. This is achieved through the R1 chip, a specialized piece of silicon dedicated entirely to processing sensor data from 12 cameras, five sensors, and six microphones.
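The 12-millisecond figure is easier to appreciate as a budget that every stage of the pipeline must share. The sketch below adds up stage timings and compares them with that budget. The stage names and their durations are hypothetical placeholders, and the 90 Hz refresh rate is an assumption used only to show how close the budget is to a single frame period.

```python
LATENCY_BUDGET_MS = 12.0  # photon-to-photon figure quoted in the text


def frame_period_ms(refresh_hz: float) -> float:
    """Time available per displayed frame at a given refresh rate."""
    return 1000.0 / refresh_hz


def total_latency_ms(stage_latencies_ms: dict) -> float:
    """End-to-end latency is the sum of the sequential stages."""
    return sum(stage_latencies_ms.values())


def within_budget(stage_latencies_ms: dict) -> bool:
    return total_latency_ms(stage_latencies_ms) < LATENCY_BUDGET_MS


# Hypothetical stage timings in milliseconds, for illustration only.
pipeline = {
    "sensor_capture": 4.0,
    "image_processing": 3.5,
    "compositing": 2.0,
    "display_scanout": 2.0,
}
ok = within_budget(pipeline)
period_at_90hz = frame_period_ms(90)
```

With these made-up numbers the pipeline totals 11.5 ms and fits. Adding a single extra millisecond to any stage would break the budget, which is the kind of constraint that motivates dedicating a chip to sensor processing.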

Spatial Personas and Co-presence

Furthermore, the social dimension of spatial computing is addressed through “Spatial Personas.” Using advanced machine learning, the system reconstructs a high-fidelity, 3D representation of the user’s face and hand movements. This allows for a sense of “co-presence” in professional and personal calls, where participants feel as though they are sitting in the same room. Developers can explore the technical requirements for building these shared experiences in Apple’s visionOS developer documentation, which covers the intricacies of RealityKit and ARKit. These tools enable the creation of physics-based interactions that make digital objects behave as if they have real-world mass and texture.

The Silicon Foundation: Unified Memory and the Neural Engine

None of these software breakthroughs would be possible without the vertical integration of Apple Silicon. The transition to the M-series and A-series chips has redefined what is possible in small-form-factor devices. This hardware foundation is what allows for the seamless multitasking required in both spatial computing and generative AI environments.

Unified Memory Architecture (UMA)

The cornerstone of this hardware success is the Unified Memory Architecture (UMA). In a traditional PC with a discrete graphics card, the CPU and GPU have separate memory pools, requiring data to be copied back and forth across a comparatively slow bus. Apple’s UMA allows the CPU, GPU, and Neural Engine to access the same high-bandwidth, low-latency memory pool without those copies. This is particularly crucial for generative AI, where large model weights need to be accessed rapidly by the Neural Engine. By avoiding redundant copies, this architecture reduces energy consumption and increases the throughput available for real-time video processing and AI inference.
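The difference between copying data and sharing it can be illustrated in software with a loose analogy. In the sketch below, `bytes(...)` stands in for a copy into a separate memory pool, and `memoryview` stands in for several processors addressing one shared buffer. This is only an analogy under those assumptions; it says nothing about how the memory hardware itself works, and the variable names are hypothetical.

```python
# One buffer of "model weights" (tiny, for illustration only).
weights = bytearray(b"\x01\x02\x03\x04")

# Separate-pool style: the second processor works on its own copy.
discrete_copy = bytes(weights)

# Unified style: both "processors" hold views onto the same memory.
cpu_view = memoryview(weights)
accelerator_view = memoryview(weights)

# An update made through one view...
cpu_view[0] = 42

# ...is immediately visible through the other, with no transfer step.
shared_value = accelerator_view[0]

# The copy is stale until someone pays to copy the data again.
stale_value = discrete_copy[0]
```

After the update, `shared_value` is 42 while `stale_value` is still 1. In the copied case, keeping both sides consistent costs a transfer every time the data changes, which is the overhead a shared pool avoids.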

The Evolution of the Neural Engine

The Apple Neural Engine (ANE) has grown rapidly in capability from one chip generation to the next. The Neural Engine in the M4 delivers up to 38 trillion operations per second (TOPS), with the A18 Pro in a similar range. This raw power is specifically tuned for the tensor operations required by deep learning, including the matrix multiplications behind the self-attention mechanisms of transformer models, which are the backbone of modern LLMs.
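A rough way to interpret a TOPS rating is to ask how many tokens per second it could support if arithmetic were the only limit. The sketch below uses the common approximation of two operations (one multiply, one add) per parameter per generated token. The 3-billion-parameter model size is a hypothetical input, and the result is an upper bound only, since real throughput is also limited by memory bandwidth, numeric precision, and hardware utilization.

```python
def compute_bound_tokens_per_second(tops: float, num_params: float) -> float:
    """Upper bound on tokens/s if arithmetic were the only bottleneck."""
    ops_per_token = 2 * num_params  # approx. one multiply and one add per weight
    return (tops * 1e12) / ops_per_token


# 38 TOPS is the figure quoted in the text; the model size is hypothetical.
ceiling = compute_bound_tokens_per_second(tops=38, num_params=3e9)
```

With these inputs the ceiling works out to roughly 6,333 tokens per second. Actual on-device generation speeds are far lower, which is a reminder that moving weights through memory, not raw arithmetic, tends to be the practical constraint.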

The shift to 3-nanometer process technology allows for unprecedented performance-per-watt. This efficiency is what enables the iPad Pro to remain incredibly thin while handling professional video editing, and what allows the Vision Pro to process a massive stream of sensor data without requiring a bulky, fan-heavy cooling system. By controlling the design of the silicon, the operating system, and the end-user devices, the entire stack is optimized for the specific demands of spatial computing and AI.

Infrastructure and Sustainability: The Apple 2030 Initiative

As the computational demands of AI and spatial computing grow, so does the responsibility to manage the environmental impact of this infrastructure. The “Apple 2030” initiative is a bold commitment to becoming carbon neutral across the entire business, including the global supply chain and the full product life cycle. This is a critical consideration for enterprises looking to align their digital transformation with environmental, social, and governance (ESG) goals.

Carbon Neutrality and Renewable Energy

This strategy is not merely about purchasing carbon offsets; it is an engineering challenge. The company has prioritized direct emissions reduction by transitioning to 100% renewable electricity for all corporate facilities and pressuring hundreds of global suppliers to do the same. This transition is essential as the power requirements for training and running AI models continue to scale. Data centers supporting Private Cloud Compute are designed to run on carbon-free energy, ensuring that the intelligent features of the OS do not leave a massive carbon footprint.

Material Science and the Circular Economy

Material science plays an equally important role. Apple has significantly increased its use of recycled content, aiming to eventually use only recycled or renewable materials in its products. As of the most recent data, over 20% of the materials used in the product lineup are from recycled sources. This includes 100% recycled cobalt in batteries—a critical component for high-performance mobile devices—and 100% recycled gold in the plating of multiple printed circuit boards. Detailed metrics on these efforts are available in the Apple Environmental Progress Reports, which outline the roadmap for eliminating plastics from packaging by 2025.

Beyond the physical hardware, Apple is investing in “nature-based solutions” through the Restore Fund. This initiative focuses on protecting and restoring forests and wetlands to remove residual carbon from the atmosphere. By integrating sustainability into the hardware design process, the company ensures that the next generation of computing does not come at the expense of the planet’s future.

Retail Evolution and the Future of Technical Support

The final piece of the ecosystem puzzle is the human element: how users learn to interact with these complex new technologies. As technology becomes more sophisticated, the workforce must adapt. We are seeing a parallel trend in the professional world, where AI automation and wider human capital trends are reshaping how talent is recruited and trained to use these very tools.

High-Touch Retail Experiences

Apple’s retail strategy has evolved to become a hub for community education and high-touch technical support. The “Today at Apple” sessions have been reimagined to focus on the creative potential of generative AI and the productivity gains of spatial computing. In the retail environment, the Vision Pro presents a unique challenge. Unlike an iPhone, which can be picked up and used immediately, the Vision Pro requires a precise fit and a guided introduction to ensure the user understands the eye-and-gesture-based navigation system.

Consequently, Apple stores have been redesigned with dedicated “Spatial Computing Demo Zones,” where environmental lighting and seating are carefully controlled to optimize the headset’s tracking sensors. This physical interaction is a vital part of the onboarding process, bridging the gap between high-end engineering and consumer usability.

AI-Driven Support and Longevity

Technical support has also become more sophisticated. The “Service Toolkit 2” allows technicians to perform deep diagnostics using machine learning-driven tools that can identify hardware failures before they become catastrophic. Furthermore, the “Self Service Repair” program reflects a shift toward longevity. By providing genuine parts, specialized tools, and detailed manuals to customers and independent repair shops, the company is extending the lifespan of its devices, which aligns with its broader sustainability goals.

The Industrial and Enterprise Impact

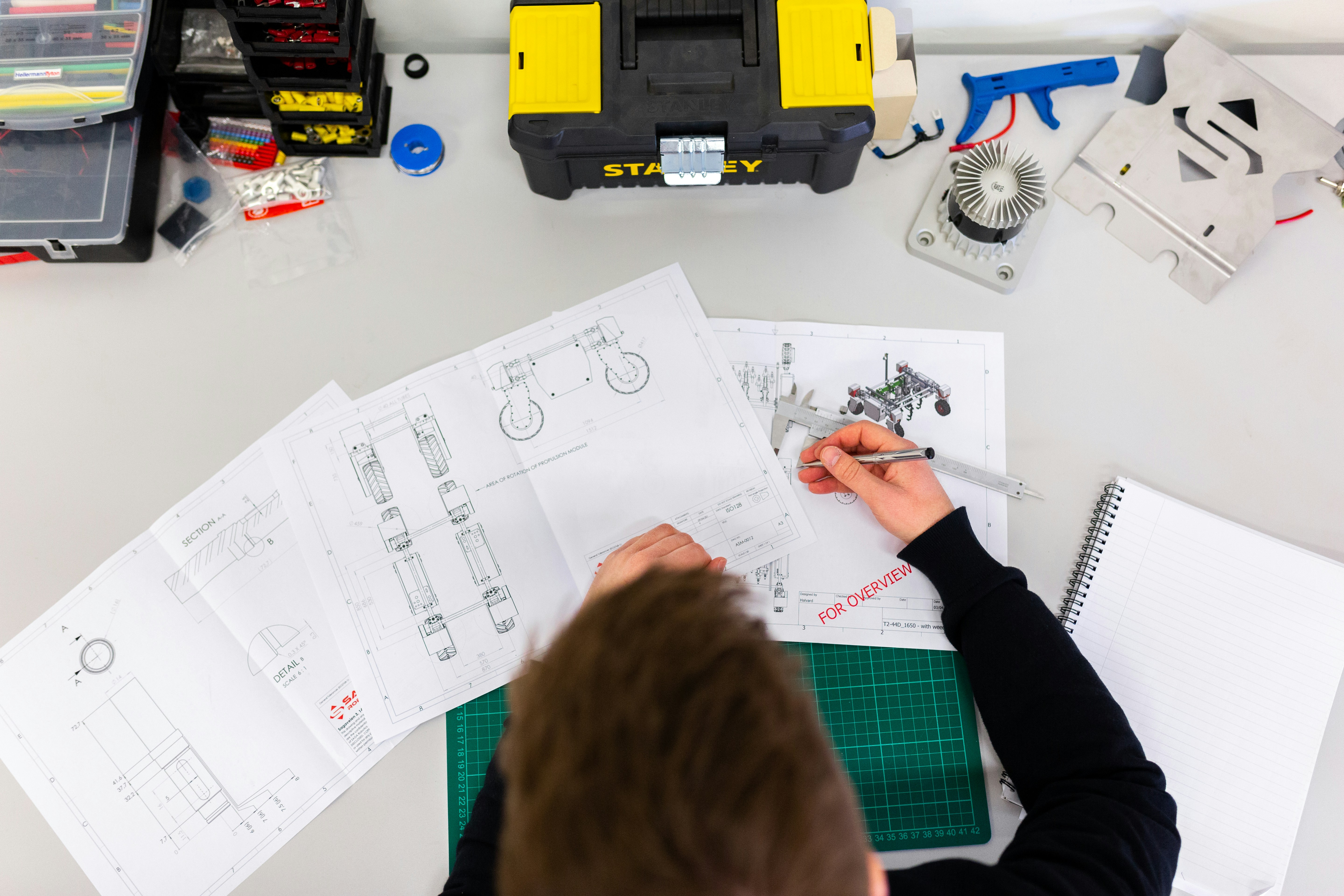

Beyond consumer use, the combination of visionOS and Apple Intelligence is set to revolutionize industrial workflows. In manufacturing, spatial computing allows for “digital twins” where engineers can overlay schematics onto physical machinery, identifying faults without disassembly. When combined with generative AI, the system can suggest repair protocols or predict maintenance needs based on real-time visual data.

In the medical field, surgeons are already utilizing visionOS to visualize 3D models of patient anatomy during pre-operative planning. The low latency of the R1 chip ensures that these visualizations remain rock-solid, providing a level of precision previously impossible with traditional monitors. As these tools become more integrated into professional workflows, they will create a surge in demand for workers skilled in spatial design and prompt engineering, further accelerating the trends seen in modern human capital management.

Conclusion: The Integrated Future

The integration of generative AI, spatial computing, and high-performance silicon represents more than just a series of product updates; it is a fundamental shift in the relationship between humans and machines. We are moving away from a world of “tools” that we use to perform tasks and toward a world of “intelligent environments” that anticipate our needs and expand our perceptions.

This ecosystem is built on a foundation of privacy and sustainability. By prioritizing on-device processing and Private Cloud Compute, the system ensures that the power of AI does not require the sacrifice of personal liberty. By committing to carbon neutrality and recycled materials, it ensures that the future of technology is compatible with the health of the environment.

As developers continue to build for visionOS and Apple Intelligence, the boundaries between the digital and physical worlds will continue to blur, creating a seamless experience that is as powerful as it is intuitive. The infrastructure is now in place for a decade of innovation that will redefine what we expect from our devices and how we interact with the world around us. The age of spatial intelligence has arrived, and its impact on the global economy, the environment, and our daily lives will be profound and lasting.